Why Feedback Voting Introduces Bias Into Your Product Decisions

Most Product teams adopt a customer feedback voting portal because it feels democratic. Customers log in, upvote features they want, and the most popular items float to the top. The reasoning is simple: if lots of people ask for something, it must be important. Except the data coming out of a voting system is warped before anyone even looks at it. The biases are structural, baked into the mechanics of how votes accumulate, who votes, and what gets seen. And when Product leaders use that data to justify roadmap decisions, they’re building on a foundation that looks like evidence but behaves like a popularity contest.

This matters because voting portals are everywhere. Tools like Canny, UserVoice, and Productboard have made it trivially easy to spin up a board and start collecting votes. The appeal is obvious, especially for teams drowning in Slack messages, support tickets, and sales call notes. A voting portal promises to cut through the noise. The problem is that it introduces a different kind of noise, one that’s harder to detect because it comes wrapped in numbers.

The Visibility Trap: Position Bias in Feedback Portals

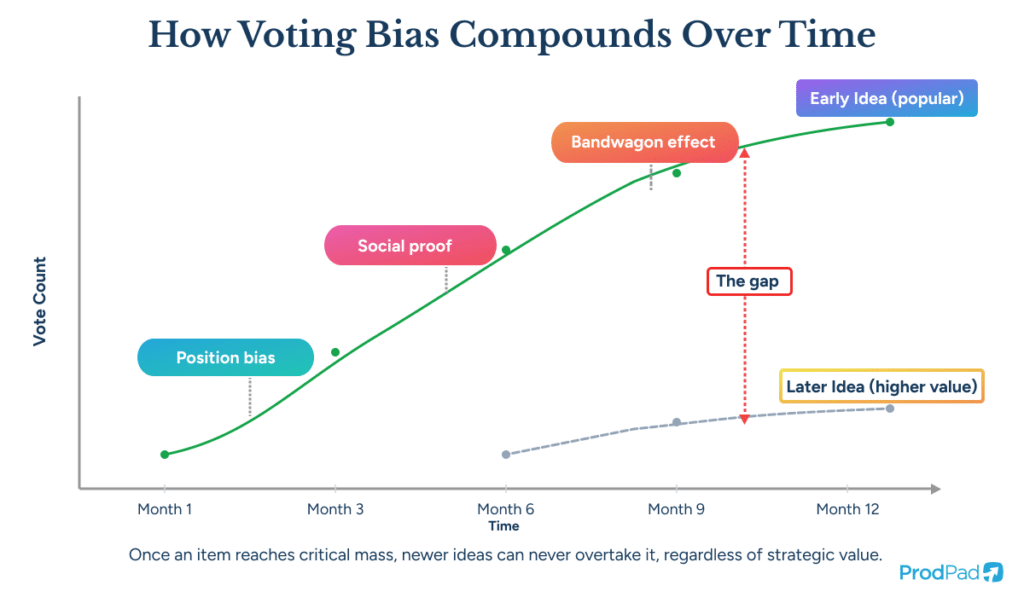

Every voting portal has a default sort order. Usually that’s “most popular” or “most votes.” The items at the top of the list get more eyeballs, and more eyeballs produce more votes. Items lower on the list get fewer views and therefore fewer votes, regardless of how important they are. This is a classic position bias effect, and it compounds over time.

Early movers accumulate disproportionate support

An idea submitted in the first month of a portal’s existence has a structural advantage over one submitted six months later. The early idea has been accruing votes passively while newer, potentially better ideas never get the same exposure. Voting portals reward persistence of visibility, not quality of insight. We wrote about this problem on the ProdPad blog years ago, calling it the Pareto principle outcome that no Product Manager wants: ideas at the top get more votes simply because they’re more visible, while potentially great ideas at the bottom get pushed further down.
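A deliberately simple Python model shows how this compounds. Everything in it is a hypothetical assumption for illustration, not data from any real portal: an attention curve that halves with each position down the list, a per-idea appeal (the chance that a visitor who notices an idea upvotes it), and a 50-vote head start for the earlier request. It tracks expected votes rather than simulating individual visitors, so the result is deterministic.

```python
from dataclasses import dataclass

# Hypothetical attention curve: share of visitors who notice the item in each
# slot. It halves at every step down the list (an assumption, not measured data).
ATTENTION = [0.5, 0.25, 0.125, 0.0625]


@dataclass
class Idea:
    name: str
    appeal: float  # assumed chance that a visitor who notices the idea upvotes it
    votes: float   # votes accumulated so far


def simulate(
    ideas: list[Idea], periods: int, visitors: int, rotate: bool = False
) -> dict[str, float]:
    """Accumulate expected votes per period under a given display policy."""
    for period in range(periods):
        if rotate:
            # Equal-exposure baseline: cycle the display order every period.
            shift = period % len(ideas)
            order = ideas[shift:] + ideas[:shift]
        else:
            # "Most votes" sort: whoever leads right now is shown first.
            order = sorted(ideas, key=lambda idea: idea.votes, reverse=True)
        for position, idea in enumerate(order):
            # Expected new votes = visitors x attention at this slot x appeal.
            idea.votes += visitors * ATTENTION[position] * idea.appeal
    return {idea.name: idea.votes for idea in ideas}


def make_ideas() -> list[Idea]:
    # The earlier request has a head start; the newer one is more appealing.
    return [
        Idea("early request", appeal=0.3, votes=50),
        Idea("newer request", appeal=0.4, votes=0),
    ]


by_votes = simulate(make_ideas(), periods=20, visitors=100)
rotated = simulate(make_ideas(), periods=20, visitors=100, rotate=True)
print("sorted by votes:", by_votes)
print("rotated display:", rotated)
```

With these made-up parameters, the earlier request finishes ahead (350 to 200) when the list is sorted by votes, even though the newer request is more appealing. Give both equal exposure and the newer request overtakes it (300 to 275) over the same 20 periods. The point is the shape of the dynamic, not the specific numbers.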

Existing framing constrains new thinking

When customers arrive at a voting board, they scan what’s already there. If someone has already articulated a feature request, other customers tend to pile onto that request rather than articulate their own version of the underlying problem. The board becomes a collection of specific solution proposals rather than a map of customer pain. This is a well-documented phenomenon in group decision-making research, and it’s exactly the kind of anchoring effect that good Product discovery is designed to counteract.

Still prioritizing by vote count? 🤔 Your roadmap might be telling you what’s popular, not what’s valuable. Read more: Why Product Teams Don’t Have a Prioritization Problem, They Have a Decision Confidence Problem

Who Actually Votes (And Who Doesn’t)

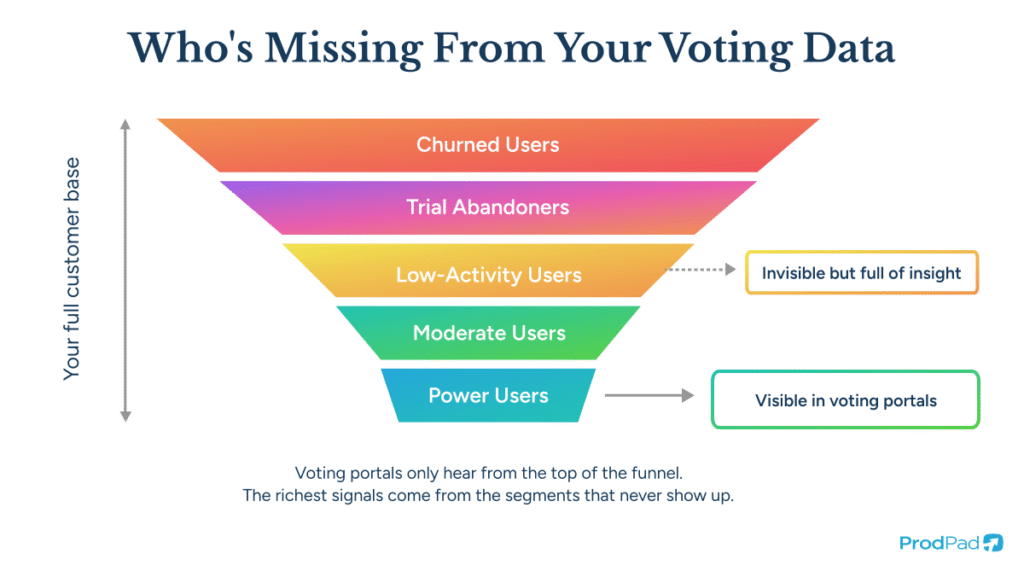

The composition of your voter pool is skewed from day one. Understanding who self-selects into voting, and who never participates, is essential to interpreting any signal the portal produces.

Power users dominate the conversation

The customers most likely to visit a feedback portal regularly are power users: people deeply embedded in your product who have strong opinions about what should change. These users are valuable, but they represent a specific segment with specific needs. When their voices dominate the board, the resulting data skews toward the needs of users who have already figured out your product, at the expense of users who are struggling with it.

This is a version of survivorship bias applied to Product feedback. You’re hearing from the survivors (people who stuck around long enough to become power users) and missing the people who churned, downgraded, or never activated in the first place. The customers who found your product confusing, who couldn’t get past onboarding, who quietly left after a trial period: they’re not logging into your feedback portal to vote. Their absence from the data is a signal, but a voting board can’t capture it.

The vocal minority shapes perception

Research on online feedback systems consistently shows that the people most motivated to participate hold extreme opinions, either very positive or very negative. The moderately satisfied majority tends to stay quiet. In a voting portal, this means the ideas that surface don’t represent what most of your customers care about; they represent what your most vocal segment cares about. That distinction matters enormously when you’re deciding where to invest Engineering time.

Revenue weighting is absent by default

A vote from a free-tier user and a vote from your largest enterprise account look identical on a voting board. Most portals don’t attach any business context to votes. So a feature request from 200 free users can outweigh a request from 5 enterprise customers who collectively represent 40% of your ARR. The numbers say one thing; the business reality says another.
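To make the gap concrete, here is a small Python sketch that keeps the revenue behind each vote instead of only counting votes. The $1M total ARR and the $80k per enterprise account are hypothetical figures, chosen only so the five enterprise accounts add up to the 40% in the example above; the sketch is not how any particular portal stores votes.

```python
from dataclasses import dataclass

TOTAL_ARR = 1_000_000.0  # hypothetical annual recurring revenue for the whole company


@dataclass
class Request:
    title: str
    voter_arr: list[float]  # ARR of each account that voted; free-tier accounts add 0

    @property
    def votes(self) -> int:
        return len(self.voter_arr)

    @property
    def arr_share(self) -> float:
        return sum(self.voter_arr) / TOTAL_ARR


requests = [
    Request("Request A (free users)", voter_arr=[0.0] * 200),
    # Five enterprise accounts at a hypothetical $80k each = $400k, or 40% of ARR.
    Request("Request B (enterprise)", voter_arr=[80_000.0] * 5),
]

ranked_by_votes = sorted(requests, key=lambda r: r.votes, reverse=True)
ranked_by_arr = sorted(requests, key=lambda r: r.arr_share, reverse=True)

for r in requests:
    print(f"{r.title}: {r.votes} votes, {r.arr_share:.0%} of ARR")
```

Sorting by `votes` puts Request A first; sorting by `arr_share` puts Request B first. Keeping the per-account revenue, rather than collapsing it into a single weighted score, lets a team look at both views side by side.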

Anchoring to Solutions Instead of Problems

The deepest flaw in feedback voting is structural, not statistical. Voting portals are built to collect and rank solution proposals (“add a dark mode,” “support CSV export,” “build a Salesforce integration”). Customers describe what they want built. The portal counts how many people agree with that description. And the Product team uses those counts to decide what to build.

The problem underneath the request stays hidden

Teresa Torres, the product discovery coach and author of Continuous Discovery Habits, has written extensively about the importance of separating the opportunity space from the solution space. When a customer says “I need a CSV export,” there’s an underlying job they’re trying to do: maybe they need to share data with a stakeholder who doesn’t have access to the product, or they need to combine data from multiple sources in a spreadsheet. Five different customers might vote for “CSV export” for five different reasons, and a different solution might serve all five of those reasons better.

A voting board collapses all of that context into a single number. Twenty votes for CSV export. Those twenty people might have the same problem, or they might have five different problems that all led them to the same proposed solution. The voting portal can’t tell you the difference. And the moment you treat “most votes” as a prioritization input, you’ve optimized for the wrong level of abstraction.

Problem-level prioritization produces better outcomes

I’ve written before about why the best Product teams prioritize at the problem level, not the idea level. Idea-level prioritization (which is what voting portals produce) is inherently bottom-up. It starts from a pool of proposed solutions and tries to figure out which solution to build next. Problem-level prioritization starts from strategic objectives and works down to which problems, when solved, would move the needle on those objectives. The right ideas and experiments to work on then fall into place within each problem space.

This is a fundamentally different approach, and it requires a fundamentally different relationship with customer feedback. Instead of asking “which feature do the most people want?” you’re asking “which customer problem, if solved, creates the most value for the business?” Those two questions produce very different roadmaps.

Prioritize problems, not features. See how problem-level prioritization changes the game: Prioritization Frameworks: Prioritize Problems, Not Ideas

The Bandwagon Effect and Social Proof Distortion

When vote counts are publicly visible (as they are on most portals by default), the numbers themselves influence future voting behavior. An idea with 500 votes looks important. A new visitor to the board sees that number and thinks, “Other people clearly want this, so it must matter.” They add their vote. The rich get richer.

Visible vote counts create momentum, not insight

This is a well-documented bandwagon effect. In political polling, researchers have long understood that publishing poll results influences subsequent voter behavior. The same dynamic plays out in feature voting. The number beside an item becomes a social signal, and customers respond to that signal rather than making an independent judgment about what they personally need.

Some tools have started offering options to hide vote counts or randomize the display order of items. These are steps in the right direction, but on most portals they’re optional settings layered on top of a system that was designed around public ranking. The underlying architecture still encourages customers to browse, compare, and pile on. Unless the entire portal experience is rebuilt around removing those dynamics (randomized display, limited item sets, hidden votes and comments), the biases persist in subtler forms.

Competitive intelligence is a free bonus for rivals

There’s also an underappreciated external risk. Public voting portals are a gift to your competitors. Jason Evanish has pointed out that user forums and voting boards are fertile ground for competitive intelligence. Your competitors can see exactly what your customers are unhappy about, what they’re requesting, and how many of them care about each gap. You’re effectively publishing a prioritized list of your product’s shortcomings for anyone to read.

The “But When?” Trap: Voting Boards Create Implicit Promises

When you publish a voting board, you’re sending a message to customers: “We want to hear what you think we should build.” Customers take that message seriously. They invest time articulating requests, they upvote things they care about, and then they wait. When the most-voted item doesn’t appear on the roadmap, customers feel ignored, and they’re right to feel that way. The portal set an implicit expectation that votes would influence decisions.

The expectation gap erodes trust

Product leaders end up in an impossible position. If they follow the votes, they’re building a product shaped by the biases described above. If they don’t follow the votes, customers who participated feel betrayed. Rich Mironov, product management coach and author of The Art of Product Management, has written at length about how product teams need to maintain strategic autonomy while staying responsive to customer input. A voting portal makes that balance harder, not easier, because it creates a public ledger of expectations that the Product team now has to manage.

Public comments turn portals into pressure campaigns

When customers can see each other’s votes, comments, and frustrations, a feedback portal stops functioning as an input channel and starts functioning as a public forum. Pile-ons happen. A frustrated customer writes a long comment about a missing feature, other customers add “+1” replies, and suddenly the Product team is managing a visible thread of public dissatisfaction.

That pressure, however understandable from the customer’s perspective, introduces a political bias into prioritization. The team feels compelled to respond to whatever is generating the most noise on the portal, even when the noise doesn’t reflect the most valuable use of their time. Sometimes there are very good reasons not to build what customers are asking for: technical constraints, strategic direction, or the fact that the proposed solution wouldn’t actually solve the underlying problem. Public pressure makes it harder to hold that line.

A related tension shows up in roadmap communication, too. I invented the Now-Next-Later roadmap specifically to move away from date-based commitments and toward strategic time horizons. A voting board pulls in the opposite direction. It says: “Here’s what people want, ranked by demand. When are you going to deliver it?” That framing is exactly the kind of commitment trap that outcome-driven Product teams are trying to escape.

See the roadmap format that replaces false promises with strategic clarity in Why I Invented the Now-Next-Later Roadmap

What Good Feedback Systems Actually Do

Listing the biases inherent in voting doesn’t mean customer feedback should be ignored. The opposite is true. Customer feedback is one of the most valuable inputs a Product team has. The question is how to collect, organize, and interpret it in ways that minimize distortion and maximize strategic value.

Capture context, not just requests

Every piece of feedback is more useful when it comes with context: who said it, what they were trying to do, what segment they belong to, how much revenue they represent, and what problem they’re actually experiencing. When feedback flows in from support tickets, sales calls, Slack messages, NPS responses, and direct conversations, each piece carries some of that context naturally. A good feedback system preserves and surfaces that context rather than stripping it away.

ProdPad’s approach to customer feedback management is designed around this principle. Feedback from any channel, including Intercom, Slack, Salesforce, email, and branded feedback portals, gets linked to the idea or initiative it relates to, tagged with customer and segment data, and connected to the strategic objectives it maps against. The point is to turn qualitative signals into evidence that can inform prioritization, not to reduce feedback to a single number.

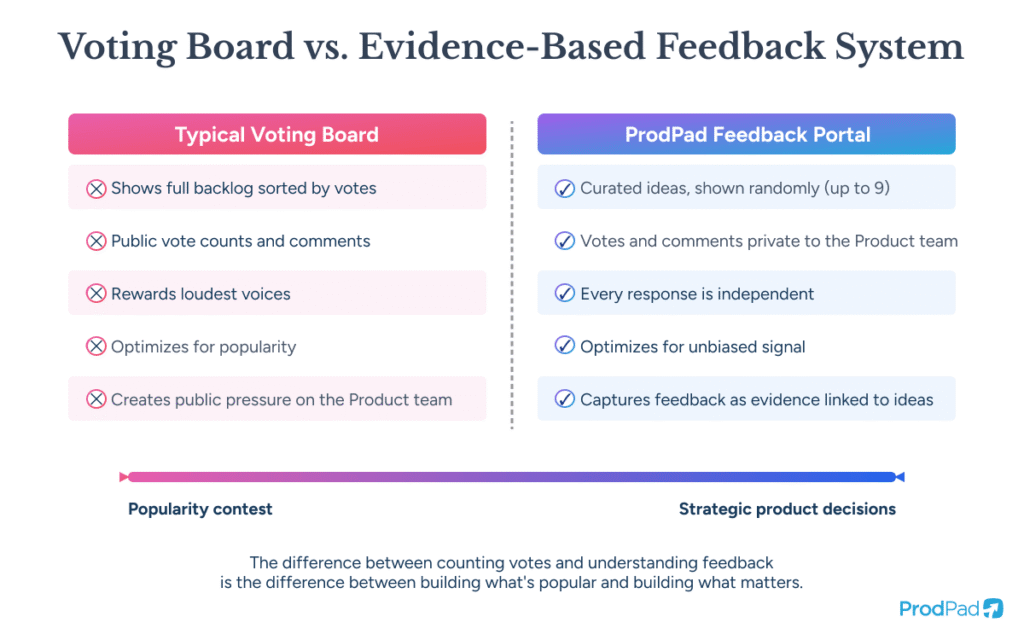

Design the portal to remove bias, not add it

ProdPad’s Customer Feedback Portal was built specifically to avoid every bias described in this article. The design choices are deliberate.

Ideas are hidden from the portal by default. The Product team selects which ideas to promote to the portal when they’re ready for customer input. This means customers aren’t browsing the entire backlog and piling onto whatever catches their eye first. The team controls what gets tested.

When a customer visits the portal, they see a random selection of up to nine ideas from the promoted pool. The selection changes from visit to visit, so no single idea sits at the top of the list accumulating votes through position bias. And because each visitor sees a different set, the system produces an unbiased aggregate signal over time rather than a popularity ranking distorted by display order. The goal is not to get every customer to evaluate the entire backlog. It’s to get an honest sense check from the customers who show up.
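The mechanics are simple to sketch. The Python below is not ProdPad’s implementation; it is a minimal illustration, under stated assumptions, of two ideas in that paragraph: draw a fresh random subset of at most nine promoted ideas for each visit, and read the results as upvotes per impression rather than raw totals. The per-impression normalization, the pool of 15 ideas, and all counts are assumptions added for illustration.

```python
import random
from collections import Counter

MAX_SHOWN = 9  # "up to nine ideas" per visit, as described above


def ideas_for_visit(promoted: list[str], rng: random.Random) -> list[str]:
    """Return a fresh random subset (in random order) of the promoted ideas."""
    return rng.sample(promoted, k=min(MAX_SHOWN, len(promoted)))


def vote_rates(impressions: Counter, upvotes: Counter) -> dict[str, float]:
    """Upvotes per time shown, so an idea isn't favored just for being shown more."""
    return {idea: upvotes[idea] / shown for idea, shown in impressions.items() if shown > 0}


rng = random.Random(42)  # seeded only so the demo is repeatable
promoted = [f"idea-{n}" for n in range(1, 16)]  # hypothetical pool of 15 promoted ideas

impressions: Counter = Counter()
for _ in range(1_000):
    impressions.update(ideas_for_visit(promoted, rng))

# Each of 1,000 visits showed 9 ideas, so impressions total 9,000 and are
# spread roughly evenly (about 600 each) across the 15 ideas.
print(sum(impressions.values()), min(impressions.values()), max(impressions.values()))

# Hypothetical counts: "b" has more raw upvotes but a lower rate per impression.
print(vote_rates(Counter({"a": 10, "b": 40}), Counter({"a": 5, "b": 8})))
```

In the last line, idea "b" collects more upvotes (8 versus 5) but was shown four times as often, so its rate (0.2) is lower than idea "a"’s (0.5).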

Customers can upvote ideas and leave comments (which get captured in ProdPad as feedback), but those votes and comments are not visible to other customers. This eliminates the bandwagon effect entirely. No customer can see how many other people voted for an idea, so every response is independent. And because comments stay private, the portal never becomes a public discussion forum where customers air grievances or coordinate pressure campaigns about what they think is wrong with the product.

This design reflects a specific philosophy: feedback portals should help the Product team gather signal, not create a public popularity contest that constrains their decision-making.

Link feedback to problems, not solutions

When feedback is connected to ideas (which are themselves connected to roadmap initiatives and OKRs), the Product team can see patterns across customers and segments. Ten customers might each articulate a different feature request that all trace back to the same underlying problem. A voting board would show ten separate items with one vote each. A well-structured feedback system would show one problem area with ten supporting data points, a much more useful signal for prioritization.
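A minimal Python sketch of the two views, assuming each piece of feedback has already been linked to a problem by someone on the Product team (the code does not infer that link). The customers, the ten requests, and the problem statement are all hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical problem label, echoing the "share data with a stakeholder who
# doesn't have access" example earlier in this article.
PROBLEM = "Stakeholders without product access can't see the data"

feature_requests = [
    "CSV export", "Excel export", "PDF export", "Scheduled email reports",
    "Guest viewer seats", "Read-only share links", "Google Sheets sync",
    "Public dashboards", "Print-friendly view", "Weekly Slack digest",
]

# One feedback record per customer: (customer, what they asked for, linked problem).
# The link to PROBLEM is assumed to have been made by the Product team.
feedback = [
    (f"Customer {n}", req, PROBLEM) for n, req in enumerate(feature_requests, start=1)
]

# Voting-board view: every distinct request is its own item.
votes_per_request = Counter(req for _, req, _ in feedback)

# Problem view: the same records grouped under the problem they trace back to.
evidence_per_problem: dict[str, list[tuple[str, str]]] = defaultdict(list)
for customer, req, problem in feedback:
    evidence_per_problem[problem].append((customer, req))

print(f"{len(votes_per_request)} items, max votes on any item: {max(votes_per_request.values())}")
for problem, evidence in evidence_per_problem.items():
    print(f"{problem}: {len(evidence)} supporting data points")
```

The voting-board view reports ten items with one vote each; the problem view reports a single problem backed by ten data points, each still carrying the customer and the original request as context.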

Use feedback volume as one input among many

The number of customers mentioning a particular problem is useful context. So is the revenue those customers represent, the strategic importance of the segment they belong to, and the degree to which solving their problem advances the company’s objectives. Impact-versus-effort prioritization keeps the conversation grounded in business value rather than raw demand. The final decision is still a judgment call, made by humans in the context of strategy and evidence. Itamar Gilad, creator of the GIST framework and author of Evidence-Guided, has advocated for treating product decisions as bets that should be tested, not as commitments to be delivered. That framing is much harder to maintain when a voting board is telling you exactly what to build.

Your feedback is full of signal. You just need the right system to find it. See ProdPad’s Customer Feedback Portal.

The Organizational Dynamics That Make Voting Boards Stick

Knowing that voting boards introduce bias doesn’t automatically make them easy to remove. They persist because they serve organizational needs that go beyond product prioritization.

They give non-Product stakeholders a sense of control

Sales teams love voting boards because they can point to vote counts to justify feature requests. Executives love them because they provide a simple narrative: “Customers want X, so we should build X.” The simplicity is the appeal. A nuanced approach to feedback interpretation requires more trust in the Product team and more patience with ambiguity. Voting boards reduce that ambiguity to a number, and numbers feel safe.

The 2026 State of B2B Product Management report found that 40% of respondents ranked poor prioritization and decision-making discipline as a serious problem, with one respondent noting that leadership routinely overrides structured frameworks in favor of reactive decision-making. Voting boards feed directly into that pattern. They give anyone in the organization ammunition to challenge Product decisions by pointing to “what customers want,” even when the underlying data is distorted.

Removing a voting board requires replacing the function it serves

You can’t just turn off a voting portal and tell stakeholders to trust the Product team. You need to replace the visibility and accountability that the portal provided. That means giving stakeholders access to a transparent prioritization process, a roadmap they can understand, and a feedback system they can see working. When stakeholders can observe feedback flowing into ideas, ideas being evaluated against objectives, and initiatives appearing on a Now-Next-Later roadmap connected to business goals, the voting board becomes redundant. The system itself provides the transparency that the portal was supposed to deliver.

Voting Boards Measure Demand, Not Value

The most important distinction in all of this is between demand and value. A voting board measures demand: how many people asked for a thing. Value is a different calculation entirely. Value accounts for which customers are asking, what strategic objective the request maps to, how much effort the solution requires, what the opportunity cost of building it is, and whether the proposed solution actually addresses the underlying problem.

Product teams that build decision confidence based on evidence and strategy don’t need a popularity ranking to tell them what to build. They need a system that captures customer pain, preserves context, connects it to business goals, and supports the kind of judgment calls that good Product leadership requires. Scoring models like RICE have their uses as conversation starters, but the best teams use scoring to create a stake in the ground and judgment to make the final call.
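For reference, RICE is usually computed as Reach × Impact × Confidence ÷ Effort. The Python sketch below uses the commonly cited impact scale; the two candidates and every number attached to them are hypothetical. Note that the request with the larger reach does not automatically come out on top, and the output is still only a stake in the ground for the judgment call described above.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    reach: float       # people or accounts affected per period (e.g. per quarter)
    impact: float      # commonly 3 massive, 2 high, 1 medium, 0.5 low, 0.25 minimal
    confidence: float  # 0.0 to 1.0: how sure you are of the reach and impact estimates
    effort: float      # person-months

    def rice(self) -> float:
        """RICE score: Reach x Impact x Confidence / Effort."""
        return self.reach * self.impact * self.confidence / self.effort


candidates = [
    Candidate("CSV export", reach=200, impact=0.5, confidence=0.8, effort=2),
    Candidate("Stakeholder sharing", reach=150, impact=2, confidence=0.5, effort=3),
]

# A ranked list to argue over, not a verdict.
for c in sorted(candidates, key=Candidate.rice, reverse=True):
    print(f"{c.name}: {c.rice():.1f}")
```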

The uncomfortable truth about feedback voting is that it optimizes for a metric (vote count) that has a weak relationship to the outcome most teams care about (building the right product for the right customers). The biases aren’t bugs in the system. They are the system. Position bias, survivorship bias, the bandwagon effect, and the conflation of solutions with problems are all predictable consequences of asking customers to vote on features.

The alternative is harder. It requires capturing feedback from diverse channels, preserving the context around each piece, connecting that feedback to strategic objectives, and making prioritization decisions that balance customer input with business judgment. That’s more work than sorting a list by vote count. It’s also how teams build the products customers actually need.