Finding Customer Success: Looking Beyond Metrics

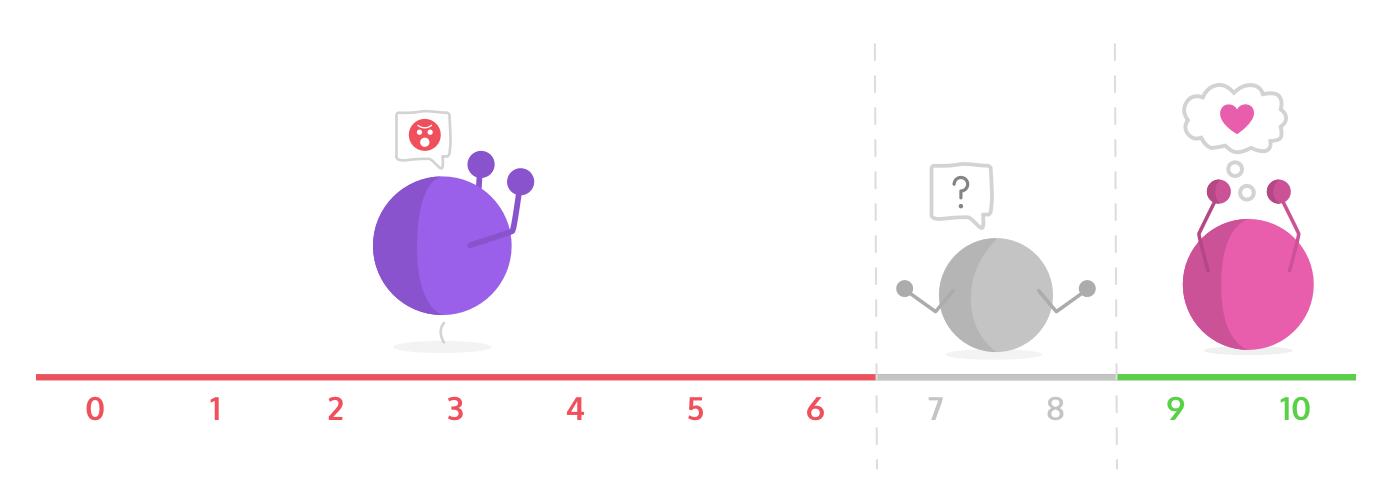

Tempting though it may be, you can’t rely just on metrics and data to tell you if your customers are happy and successful. There isn’t one true metric for success – so whether you look at NPS scores, CSAT, SLAs or whatever else, you won’t get a holistic view of customer success.

You might be catching a customer at the wrong time, ignoring at-risk customers, gathering the wrong data, using data in the wrong way, or holding on to numbers without understanding their meaning. There are so many areas where relying solely on data can lead you down the wrong path. In the words of economist Ronald Coase: “Torture the data and it will confess to anything.”

We believe you should create meaningful relationships with your users rather than just look to score their interactions with your company.

What we don’t track and why it doesn’t work for us

1. Net Promoter Score (NPS)

Net Promoter Score is a metric that has often been discredited, but it’s still treated as gospel in many companies. It’s just a self-reported number, without any context. UX designer Jared Spool thoroughly debunks NPS in this blog from last year, and it’s well worth a read. He says: “People who believe in NPS believe in something that doesn’t actually do what they want. NPS scores are the equivalent of a daily horoscope. There’s no science here, just faith.”

We see the issues with NPS as follows:

- It’s asking the wrong question: “What do you mean, would I recommend this (b2b) tool to my friends or family… probably not! My friends and family don’t worry about product management and I don’t have many friends in this field… what’s the point in recommending it?”

- Some people just don’t recommend things much: NPS specifically asks customers if they would recommend your product, and some people don’t recommend things even if they’re perfectly happy customers. ¯\_(ツ)_/¯

- You get false positives: Just because someone says they would recommend your product doesn’t mean they are satisfied. And a low score doesn’t necessarily mean a customer is at risk. They may simply be less likely to recommend things, or have something to vent about.

- Your most at-risk customers won’t bother responding: People who don’t reply to NPS surveys are your most at-risk customers… and with typically low reply rates (even with a good delivery system), that’s a lot of customers you never hear from.

- It can be gamed: NPS can be gamed by asking only specific customers.

- Scores vary regionally: For cultural reasons, NPS scores differ between the US and the UK, and probably between other markets too.
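To make the gaming point concrete, here is a minimal sketch using the standard NPS calculation (percentage of promoters scoring 9 or 10, minus percentage of detractors scoring 0 to 6). The response scores are invented for illustration and are not ProdPad data.

```python
def nps(scores):
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("need at least one response")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return round(100 * (promoters - detractors) / len(scores))


# Hypothetical responses from everyone who answered the survey.
all_responses = [10, 9, 9, 8, 7, 7, 6, 5, 3, 2]

# The same customer base, but only the customers we expected to be happy were asked.
hand_picked = [s for s in all_responses if s >= 7]

print(nps(all_responses))  # -10
print(nps(hand_picked))    # 50
```

Nothing about the customers changed between the two numbers. Only the choice of who was asked did, which is why the score on its own says so little.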

There is nothing wrong with checking in with your customers, and that’s what NPS is for. But it’s very easy to game NPS results, and there are other ways to engage with your users that lead to much longer-lasting success. We’ll get to that later…

2. Customer SATisfaction (CSAT)

CSAT asks customers to rate their support experience. But the numbers are always muddled by customers who leave a negative review because they’re frustrated with the product rather than the support. Bear in mind that CSAT only measures a single interaction, and the response to that interaction may be biased. The agent may have given a helpful response, but if it wasn’t the response the customer was looking for, it could be marked as a bad experience. Even a good interaction can be perceived as negative simply because of outside forces.

There’s a story behind any poor rating – often a product issue that needs to be looked at – but CSAT scores hide that story and penalize your support rating.

3. SLAs (Service Level Agreements)

SLAs track the performance of your support when solving a problem. But it’s important to understand the data within context.

For example, if you see a high handle time and you don’t focus on why it took a long time, then the data can be misinterpreted.

There could be a number of explanations for high handle times, for example:

- Was the issue overly complicated and hard to explain?

- Was the user too busy to jump on a call and didn’t have the time to troubleshoot an issue with you?

- Was it actually more convenient for the user to go through an email back-and-forth rather than get on the phone?

And what about the time of day that tickets come in? We’re a small team at ProdPad, so we need to plan our support and shift times so we get the maximum amount of coverage. All our clients can tell you we respond to every single ticket, and we’re always available no matter what!

If you get too many tickets, it doesn’t necessarily mean you need more people on your team. Maybe you need to make product adjustments or improve the documentation. If you’re getting too few tickets, it doesn’t mean support is doing great. It may just mean people don’t understand how to reach support.

It’s important to understand the data within context. You can’t just look at the data and think you’re doing well because the numbers are low or high.

What actually works for us?

There are so many touch points where a customer can engage with your company. This might start with an exploratory visit to our website to see if we’re what they’re looking for, through to sign-up for a trial, on to being a long-term customer perhaps looking to upgrade their plan. So while we need to be clear that we’re here to help, we also need to give customers as many opportunities for self-learning as possible.

So it’s important to look at all the ways in which customers come to you with problems, because then you can create ways of dealing with their issues that are specific to their point on the customer lifecycle.

The way we onboard users through our emails is based on their in-app behavior (you can read more about this here). If we focused on a single metric we wouldn’t be looking at where in the funnel customers were getting stuck.

Now let’s look at the ways we have found to improve customer success when using ProdPad.

Invest in Education

Education is the key to customer success, and at ProdPad we’re very confident in what we provide. We understand that everyone learns differently so we’ve invested a lot of time in creating different ways for a customer to learn about how to use ProdPad:

- We have a set of two-minute masterclass videos that help you get the hang of basic functionality.

- We blog about best practices.

- We hold ProdPad demos twice a month where we take questions on product management too.

- We also maintain a help center with a super smart Algolia search that tells us what our customers are searching for so we know where we need to beef it up.

- We give best-practice webinars that rack up as many as 500 attendees each time.

Create a Community

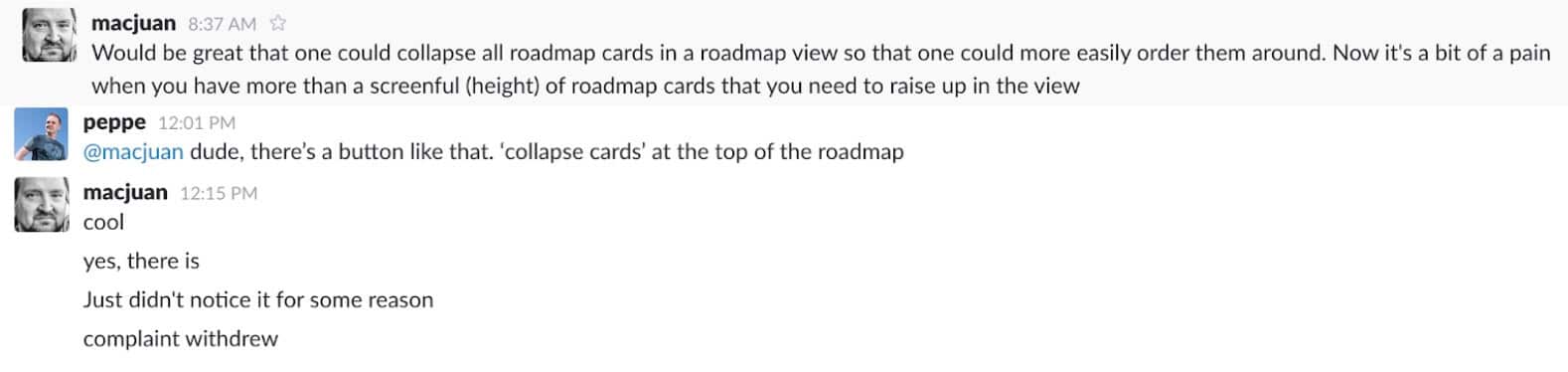

One of our core values at ProdPad is transparency. We’ve created a community in Slack where we can speak directly with our customers. This facilitates conversations, unlocks feedback, ramps up engagement, and helps us to understand what our customers are looking for.

It has also helped our users to help each other!

There’s much to be said for the power of direct contact in an informal environment. Our Slack community means we can:

- Give users release notes hot off the presses

- Handle any bugs that pop up

- Create beta testing groups

- Ask users about their problems

We take care of the ProdPad Slack channel from 6am-10pm UK time, but the culture of the community is such that our members will happily jump in and answer questions when we’re not around.

If you’re looking for help to set up your own community, we recently hosted a webinar on the topic and you can watch it here.

Find the Root of the Problem

When customers message us for help or with a piece of feedback, we never tell them “no”. We work with them to understand what they’re trying to do so we can find an appropriate solution or workaround.

We use ProdPad to capture and sort our customer feedback (of course we do!). As our CEO Janna says in our webinar on how to use ProdPad and Trello together: “Within ProdPad we have an entire section called customer feedback where you can capture what individual users have asked for, then link the feedback to different ideas. What this allows you to do is understand the context of why someone asked for a feature. For example you could have a group of customers asking for colour coding on tags and another set asking for hierarchy in tags – at this point it will give you the context that people simply want a way to organize their tags better.”

So you shouldn’t be saying “no”, but instead listening to what people want and then finding a way to solve it. The solution might not be the one that was explicitly requested, but you’ve spent the time finding the context of what they wanted and then let your product managers fix the problem.

It’s also important to remember not everything has to be solved instantly. When we ship a feature that has been requested through customer feedback, I get back to whoever asked for it. (This has actually brought back customers who left while we were building it!)

What we do track

Of course, that’s not to say that there isn’t a place for some metrics and monitoring of trends, so at ProdPad we track trends in onboarding, feedback, product analytics and relative health.

Onboarding

This gives us an understanding of why people are finding us and helps us with how we can show value to our users. Our onboarding has been personalized and gamified. By tracking time to value and time to subscription we get an understanding of how long it takes for our users to get on board and how we can shorten that time while providing value.
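As a rough illustration of those two trends, the sketch below computes the median number of days from sign-up to a first "value" moment and from sign-up to subscription. The record layout, the field names (`signed_up`, `first_value`, `subscribed`) and the dates are all assumptions made up for the example. What counts as "value" will differ from product to product.

```python
from datetime import date
from statistics import median

# Hypothetical trial accounts; field names and dates are illustrative only.
accounts = [
    {"signed_up": date(2024, 1, 1), "first_value": date(2024, 1, 3), "subscribed": date(2024, 1, 15)},
    {"signed_up": date(2024, 1, 5), "first_value": date(2024, 1, 6), "subscribed": None},
    {"signed_up": date(2024, 1, 10), "first_value": date(2024, 1, 17), "subscribed": date(2024, 2, 9)},
]


def median_days(accounts, start_key, end_key):
    """Median days between two milestones, skipping accounts that have not reached the second one."""
    spans = [
        (account[end_key] - account[start_key]).days
        for account in accounts
        if account[end_key] is not None
    ]
    return median(spans) if spans else None


time_to_value = median_days(accounts, "signed_up", "first_value")
time_to_subscription = median_days(accounts, "signed_up", "subscribed")

print(time_to_value, time_to_subscription)
```

A median is used here rather than a mean so that one very slow account doesn’t skew the trend, but that is a design choice for the sketch, not a rule.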

Feedback

If we don’t take in feedback we lose sight of who we’re building things for. We’ve written before about how to deal with negative customer feedback, and it’s better to focus on training your team on how to manage negativity, rather than stress them out because they got negative feedback in the first place. We look at and answer every single feedback request, no matter how silly. Yes, even those that just say ‘Hi’ or ‘asdasda’ – Peter, I’m looking at you!

Product Analytics

Product analytics give us an understanding of where users get stuck and where there’s room for improvement. We built our own internal tracking, and plugged it into Bugsnag and FullStory. When someone has an issue, it is flagged automatically, so I’m told what happened and where it happened. It allows the team to be proactive.

Health Score

We also track a relative health score. By “relative” I mean it’s not always an exact science! This gives us an understanding of usage trends and an indication of whether users are getting stuck or changing how they use the app.

We know there are certain “power features” that keep people engaged, like creating ideas, feedback and pushing to development. If there is a drop in those items, or a drop in page views, we know something is up. I know then that I need to check the user’s activity and make an appropriate decision as to whether or not it’s time to reach out (they could be doing other things, like commenting on issues for example and adding priority scoring to their ideas, which are considered medium or low usage features).
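Here is one way a relative health check like this could be sketched. It compares an account’s recent activity against its own earlier baseline and flags a large drop. The event names, the weights and the 50% threshold are assumptions chosen for the example, not ProdPad’s actual scoring, and a flag is only a prompt for a person to look at the account.

```python
# Assumed weights: power features count for more than a plain page view.
WEIGHTS = {
    "ideas_created": 10,
    "feedback_added": 10,
    "pushed_to_dev": 10,
    "page_views": 1,
}


def usage_score(counts):
    """Weighted sum of activity counts; events missing from the dict count as zero."""
    return sum(weight * counts.get(event, 0) for event, weight in WEIGHTS.items())


def relative_health(current, baseline):
    """Current usage as a fraction of the account's own baseline, or None without a baseline."""
    base = usage_score(baseline)
    if base == 0:
        return None
    return usage_score(current) / base


def needs_check_in(current, baseline, threshold=0.5):
    """True when usage has dropped below the threshold fraction of the baseline."""
    ratio = relative_health(current, baseline)
    return ratio is not None and ratio < threshold


# Hypothetical monthly counts for two accounts.
baseline = {"ideas_created": 10, "feedback_added": 8, "pushed_to_dev": 4, "page_views": 200}
quiet = {"ideas_created": 2, "feedback_added": 1, "pushed_to_dev": 0, "page_views": 90}
steady = {"ideas_created": 9, "feedback_added": 8, "pushed_to_dev": 5, "page_views": 180}

print(needs_check_in(quiet, baseline))   # True
print(needs_check_in(steady, baseline))  # False
```

Because the score is relative to each account’s own history, a small team that has always been light on usage is not flagged just for being small.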

What happens when you talk to your customers more

We know our support is working well without using classic customer success metrics because the type of questions we get asked has shifted from “How do I…” basics to more complicated implementation and best-practice questions.

This tells us that:

- Our help center is tackling and answering those basic questions

- Our customers are becoming more advanced and therefore asking more advanced questions

It means that we’re having a discussion, so that our customers are talking to us, and we can teach them new things. Discussions also unlock feedback and I get insights from these discussions that I can pass on to the product team.

Making our customers badass users is part of our mission.

Our goal is to talk MORE to our customers, not less.

Is this scalable?

Scalability and growth are always a concern.

It’s easy to look at what big companies are doing and think that it’s the only way. I’ve worked at some big companies in the past and the truth is that surveys, NPS, performance surveys, response times and so on, have less to do with the success of the customer and more to do with vanity metrics that are easy to relay to the board. They miss a big chunk of the context of where that data comes from.

Creating context comes from real-life interactions. The time spent looking into ways to create more opportunities is a lot more valuable to us and our customers than purely tracking what they’re doing.

As a happy side effect, we’ve been able to take these relationships offline, and we’ve become good friends with many of our clients. That relationship has become invaluable, for us and for them.

Our customer success relies on more than just metrics and numbers. It relies on us being human, connecting with people, being honest and transparent, and fostering relationships. We’re building software, yes, but we’re building software for real people, and if we want to be successful, we have to understand who we’re building for.

Moving forward, as we grow, I don’t think we’ll ever go back to measuring things like CSAT. Just because we have more people doesn’t mean that the quality of service should go down. I’d rather our future support team know how to create personal relationships with our clients and to get to know them as people, not as robots.
