AI Made Your Product Team Faster. It Didn’t Make Them Better

Every product org I talk to has an AI story now. Specs written in seconds. Roadmaps populated overnight. Customer feedback summarized before the PM’s coffee is cool enough to drink. The speed is real. The progress is mostly imaginary.

I had a conversation with David Pereira recently that crystallized something I’ve been circling for months. David, whose book Untrapping Product Teams is one of the sharpest diagnoses of product dysfunction out there, put it bluntly: the teams that were already building the wrong things are now building the wrong things faster. AI has become a force multiplier for motion, and motion is getting mistaken for progress at every level of the organization.

This is a problem that sits squarely on the shoulders of senior Product leaders and founders. Teams didn’t choose to skip problem framing. The systems they operate in did.

The Return of Capability-Led Thinking

Product organizations have been fighting capability-led thinking for more than a decade. The idea that “we can build it, therefore we should build it” has been called out by Melissa Perri in Escaping the Build Trap, by Marty Cagan in Empowered, and by every credible Product thinker writing today. And yet the pattern keeps returning. It keeps returning because the conditions that produce it never fully change.

Why the Pattern Persists

Capability-led thinking thrives wherever there’s a mismatch between what an organization can produce and what it has permission (or patience) to learn. When new technology arrives, that mismatch widens. AI has widened it dramatically.

Consider what’s happened. Before AI tooling became common, there was a natural friction in the product process. Writing a spec took time. Synthesizing feedback took time. Scoping a solution took time. That friction was often annoying, but it also created space for thinking. It forced teams to sit with ambiguity a little longer, to ask whether the problem was understood well enough to justify the effort of writing the solution down.

AI has collapsed that friction. A PM can go from a vague customer complaint to a fully formed spec in under an hour. The feature factory dynamic that John Cutler diagnosed years ago now operates at a speed that makes the old version look quaint.

The issue isn’t that AI generates bad outputs. Often the outputs are well-structured and thorough. The issue is that the inputs were never interrogated. The problem was never framed. The customer need was assumed, not validated. And so the beautifully formatted spec describes a solution to a problem that may not exist, or may not be the problem that matters most.

Speed Without Direction Compounds Waste

Itamar Gilad, creator of the GIST framework and author of Evidence-Guided, has been writing for years about how opinion-based decision-making dominates product organizations, even ones that claim to be data-driven. His Confidence Meter makes visible something most teams would rather not acknowledge: the gap between how certain they feel about an idea and how much actual evidence supports it.

AI widens that gap. When a tool produces a polished artifact, the artifact itself generates confidence. A well-written spec looks like a well-researched spec. A cleanly summarized set of customer insights looks like deep understanding. Leadership sees the output and assumes the thinking happened. The team ships, measures nothing meaningful, and moves on.

The compounding effect is significant. Every cycle that skips problem framing adds more features to a product without adding clarity about what the product is for. Over time, the product becomes harder to reason about, harder to maintain, and harder to sell. Technical debt accumulates alongside strategic debt, and neither shows up on a dashboard until it’s expensive to fix.

The Cost of Skipping Problem Understanding

There’s a phrase that circulates in product leadership circles: “fall in love with the problem, not the solution.” It has become so common that it risks losing its meaning. But the underlying principle is structural, not sentimental. Organizations that skip problem understanding don’t just build the wrong features. They build the wrong operating model.

What Gets Lost When Problem Framing Disappears

Problem framing is the work of defining what you’re actually trying to change for customers and the business, and understanding it well enough to recognize a good solution when you find one. It includes research, yes. But it also includes the harder work of building shared understanding across Product, Design, and Engineering about what “done” looks like and how you’ll know if you got there.

When AI tools generate solutions quickly, the temptation is to skip that shared understanding work entirely. After all, the spec is already written. The user stories are drafted. Engineering can start. And this is where the real cost appears: not in the quality of any single feature, but in the erosion of the decision-making muscle across the team.

Teresa Torres’ continuous discovery habits model is built on the idea that product teams need to be making frequent, small bets informed by direct customer contact. When AI-generated outputs replace that direct contact, teams lose the context that makes those bets meaningful. They stop asking “why this problem?” and start asking “how fast can we ship this solution?”

David Pereira talks about this in terms of traps. Teams get trapped in coordinative workflows where the PM hands off a spec, Engineering builds it, and nobody owns the outcome. AI accelerates the handoff without addressing the workflow’s fundamental weakness: nobody paused to ask whether the thing being handed off was the right thing.

The Incentive Problem Underneath

The deeper issue is that most product organizations still reward speed and volume. Quarterly planning cycles favor teams that can show a full backlog of scoped work. Roadmap reviews favor teams that can demonstrate throughput. Annual performance reviews favor PMs who shipped a lot.

None of these incentives reward the team that spent three weeks in discovery and came back with the insight that the highest-value opportunity was something nobody had considered. None of them reward the PM who killed a project early because the evidence didn’t support continuing.

The result is a system that penalizes depth and rewards surface area. AI plugs directly into that system, making the surface area even easier to expand. Teams that already struggled to protect time for product discovery now have even less organizational patience for it, because leadership can see how fast specs and prototypes appear and reasonably asks: “why can’t we just go faster?”

Delayed Gratification as a Competitive Advantage

Speed has become the default metric for evaluating product capability. The faster a team moves from idea to shipped feature, the more effective it’s considered. AI has accelerated this further, making it possible to collapse what used to take weeks into days. And yet the organizations producing the best outcomes are the ones that deliberately slow down at the front of the process.

Why Slowing Down at the Front Creates Speed at the Back

There’s a paradox in product development that’s worth naming explicitly. Teams that invest more time in problem understanding before committing to a solution tend to ship fewer things, but the things they ship are more likely to move the metrics that matter. The total cycle time from “we identified an opportunity” to “we produced a measurable outcome” is often shorter for these teams, because they spend less time building things that don’t work, less time reworking solutions that missed the mark, and less time in post-launch firefighting.

This is the principle behind the Now-Next-Later roadmap. The format exists because it forces teams to separate what they’re confident enough to work on now from what still requires learning. Items in “Later” are deliberately vague, because pretending to know the answer before you’ve done the research is dishonest and wasteful. Items move forward only when the evidence justifies commitment.

AI fits beautifully into this model when it’s deployed in the right place. Using AI to accelerate research synthesis, to surface patterns in feedback data, to generate hypotheses for testing: all of this amplifies good product practice. The problem arises when AI is used to skip the stages where learning happens and jump directly to specifying and shipping.

The Organizational Courage Problem

Delayed gratification requires organizational courage. A Product leader who tells the executive team “we’re spending this quarter deepening our understanding of the problem before we commit to a solution” needs a level of trust and credibility that takes time to build. In organizations where PMs are already struggling with decision confidence, AI offers an easier path: generate outputs fast, show progress on the roadmap, and deal with the consequences later.

The organizations that treat problem understanding as a competitive advantage tend to share certain characteristics. Product teams are funded as long-lived value streams, not project-based delivery squads. OKRs describe customer outcomes, not feature delivery. PMs get explicit permission (and time) to run experiments that might not produce a shippable result. And critically, their leadership has learned to read a roadmap that shows learning in progress, not just features in motion.

What Leaders Must Change in Incentives and Expectations

The operating model shift this requires isn’t about tools. It’s about what leadership asks for, what leadership measures, and what leadership rewards. Product teams will optimize for whatever the system incentivizes, and right now most systems incentivize exactly the wrong things for an AI-augmented world.

Stop Rewarding Throughput

The single most damaging incentive in modern product organizations is rewarding throughput. When PMs are evaluated on how many features they ship, how many specs they write, or how many items move across the board in a quarter, the message is clear: motion matters more than direction.

In an AI world, this incentive becomes actively destructive. A PM can generate a quarter’s worth of specs in a week. They can populate a roadmap that looks ambitious and well-planned. Leadership reviews it, sees volume, and gives a thumbs up. But nothing on that roadmap was validated. Nothing was tested. Nothing connects to a measurable customer outcome. The roadmap has become what Rich Mironov would call a political document rather than a strategic one.

Changing this requires leadership to redefine what good looks like. A strong quarter for a Product team might include shipping two things instead of ten, if those two things produced measurable impact. It might include killing three initiatives that didn’t survive validation, because the team learned something valuable and redirected resources to higher-value work. Or it might include a research insight that changes the company’s understanding of its market.

Measure Learning Velocity, Not Delivery Velocity

The shift from delivery velocity to learning velocity is one of the most important transitions a product organization can make. Delivery velocity asks: “how fast are we turning ideas into shipped features?” Learning velocity asks: “how fast are we generating validated insights about our customers and market?”

Both matter. But in an organization that has AI accelerating delivery, learning velocity becomes the bottleneck and the differentiator. Two companies with the same AI tooling and the same delivery speed will produce very different results if one of them is learning faster.

Measuring learning velocity means tracking things like: how many experiments ran this quarter, how many hypotheses were validated or invalidated, how quickly the team moved from a new customer insight to a testable bet, and how many times a roadmap initiative was refined based on new evidence before being committed to delivery.

ProdPad was designed around this principle. Every idea carries its hypothesis. Every initiative connects to an objective. The workflow tracks progress from discovery through delivery and into outcome measurement. The tool makes it structurally difficult to ship something without articulating why you believe it will work and how you’ll know if it did.

Redefine the PM Role for an AI-Augmented World

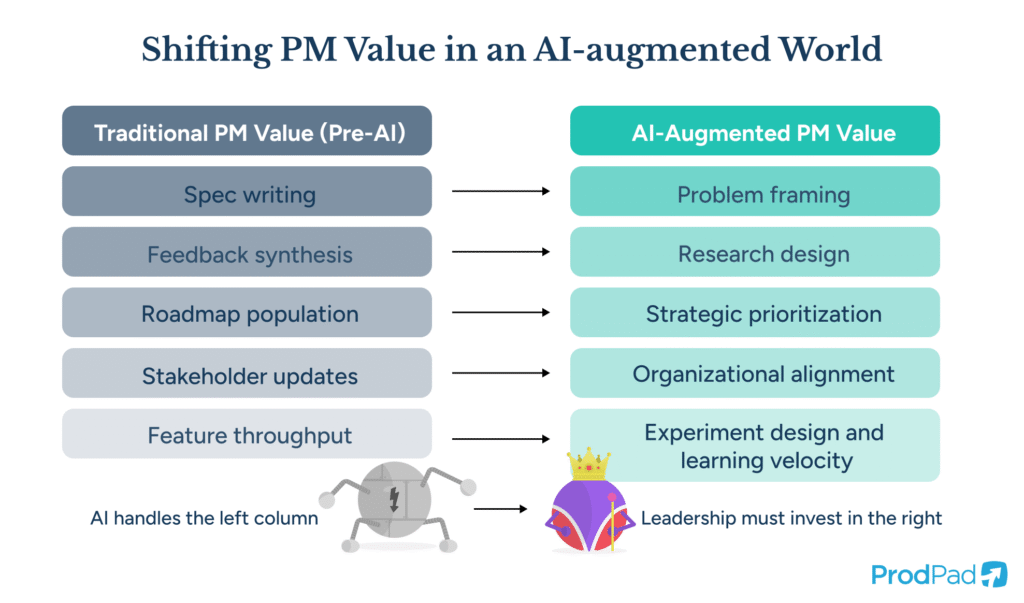

There’s a temptation to treat AI as a productivity multiplier for PMs, letting them do the same job in less time. The better frame is to treat AI as a capability that changes what the PM job is.

If AI can write specs, synthesize feedback, and draft user stories, then the PM’s value shifts decisively toward the work AI cannot do: framing problems well, building organizational alignment around which problems matter most, designing experiments that generate genuine learning, and making judgment calls about when to continue investing in an initiative and when to stop.

This reframing has implications for hiring, performance evaluation, and career development. The PM who can generate the most output is no longer the most valuable PM. The PM who can frame the most insightful problem, design the most effective experiment, and make the most courageous prioritization call is.

Product leaders who understand this will restructure their teams accordingly. Investment in research capability comes first. Discovery time gets protected. Killing work that produces no evidence of value becomes normal. And AI gets deployed exactly where it’s most powerful: accelerating the parts of the process that benefit from speed, while protecting the parts that benefit from depth.

The Competitive Moat Is Judgment, Not Speed

Every product organization now has access to roughly the same AI capabilities. The tooling is broadly available. The productivity gains from generating specs, summaries, and prototypes are accessible to anyone willing to adopt the tools. Speed, in other words, is no longer a differentiator. Everyone is fast.

What differentiates organizations in this environment is the quality of their judgment. The ability to identify the right problems. The discipline to validate before committing. The courage to invest in learning even when it delays visible output. The structural commitment to connecting feedback to ideas to roadmap to outcomes in a way that creates institutional learning, not just institutional motion.

David Pereira’s framing of this is useful: the question isn’t whether to use AI, but whether your organization is capable of directing AI toward problems that matter. If problem framing is weak, AI accelerates waste. If problem framing is strong, AI accelerates impact. The tool is neutral. The operating model determines the result.

For senior Product leaders and founders reading this, the implication is concrete. Audit your incentives. Look at what your organization actually rewards, celebrates, and promotes. If the answer is volume of output, you’re building a system that will use AI to produce more of the wrong things, faster. If you can shift that answer toward validated learning and measurable customer outcomes, AI becomes genuinely transformative.

The teams that win the next five years won’t be the ones that shipped the most features. They’ll be the ones that understood the most problems and solved the ones that mattered.